Nvidia GeForce RTX 5070 review: No, it’s not “4090 performance at $549”

- Thread starter JournalBot

I suppose that means the GPU question for my current build isn't so much green or red, then, but 7900XT or 9070XT.

Upvote 124 (125 / -1)

Cyrano4747

Wise, Aged Ars Veteran

It's all going to come down to how good AMD's ray tracing and upscaling are. Those features are where nvidia has been killing it, to the point that a pure raster-to-raster comparison doesn't really tell the tale any more. Doubly so now that we have games flat out requiring RT, and if it's going to be a requirement you'd like it to be good.

Upvote -9 (38 / -47)

Meant with no disrespect to Andrew at all:

Yeah, no shit. Or more accurately, loads of it, as Nvidia was shoveling to shape a fabulous turd sculpture and pretend it would supersede a 4090 at one third the price. "It's just as fast if you don't run the same things and the things you do run you do them differently!" Uh huh. My frame rates are a lot higher at 1024x768, too. What's your point, Nvidia?

Upvote 124 (128 / -4)

Seems like it would be a worthy upgrade from my 2070... at €550... but I put the odds of getting one under 1k as basically non-existent which makes the whole idea moot.

Upvote 45 (49 / -4)

Ugh I hate review embargoes, because it would be useful to compare the 5070 versus the 9070 NOW instead of a couple of days later.

I'm really hoping that AMD can catch Nvidia sleeping here and make up some good ground. AMD's done it already with Intel in the CPU space, and Nvidia is really banking hard on supplanting high card prices with AI, so here's to hoping.

Upvote 79 (82 / -3)

fuzzyfuzzyfungus

Ars Legatus Legionis

Did you at least get as many ROPs as advertised, or are some of those generative as well?

Upvote 110 (112 / -2)

Cyrano4747

Wise, Aged Ars Veteran

Ugh I hate review embargoes, because it would be useful to compare the 5070 versus the 9070 NOW instead of a couple of days later.

I'm really hoping that AMD can catch Nvidia sleeping here and make up some good ground. AMD's done it already with Intel in the CPU space, and Nvidia is really banking hard on supplanting high card prices with AI, so here's to hoping.

Yeah, it really really sucks when you basically only have a day to get the card, if that.

FWIW the Gamers Nexus review is doing a lot of wink wink nudge nudge by comparing it to some AMD cards that they are heavily implying the 9070 roughly equates to. If the point they're making about the 9700 XT Hellhound is true, the AMD cards are going to be pretty predictably head and shoulders better in raster and slightly to significantly worse at RT depending on title.

I still want to see apples to apples comparisons, though, especially with RT because that's where the real test is for AMD this time around.

Upvote 43 (45 / -2)

The RTX 5070 feels like the kind of product you make when you're not particularly worried about what your competition is doing. The entire 50-series has sort of felt like that so far, between the astronomically high price of the RTX 5090 and the so-so performance improvements for the 5080 and 5070 Ti. But it's different for the RTX 5070 because AMD actually has an answer for it coming soon.

I'm not qualified to question the allegations of laziness due to a perceived lack of competition. But I'm wondering if we aren't just bumping up against diminishing returns for graphics technology such that generational improvements (that don't come from die-shrinks, more VRAM, and image processing tricks) are just not on the table anymore.

Upvote 40 (43 / -3)

I suppose that means the GPU question for my current build isn't so much green or red, then, but 7900XT or 9070XT.

You should really look at the 9000 cards unless you find a deal on a new 7900 XT that's too good to refuse. The expected RT improvements and introduction of FSR4 make 7000 series cards at the same raster performance and price a non-starter. ...for me.

Upvote 48 (49 / -1)

I'm not qualified to question the allegations of laziness due to a perceived lack of competition. But I'm wondering if we aren't just bumping up against diminishing returns for graphics technology such that generational improvements (that don't come from die-shrinks, more VRAM, and image processing tricks) are just not on the table anymore.

I would almost welcome that. Modern games, at least, tend to be graphically extremely impressive but often lacking in so many other areas, it'd be interesting to see which way development would push if graphical improvements were suddenly off the table.

Upvote 36 (39 / -3)

You should really look at the 9000 cards unless you find a deal on a new 7900 XT that's too good to refuse. The expected RT improvements and introduction of FSR4 make 7000 series cards at the same raster performance and price a non-starter. ...for me.

(Apologies for the double post, that reply came in while I was typing the previous one.)

I already got my 7900XT, but it'll be well within the EU's two-weeks refund window when the 9070s drop. Current game plan is to see if I can get a Sapphire or XFX one for reasonably close to the €720-ish that the 7900 cost, then return that one. I was, perhaps foolishly, banking on AMD rolling low for this generation.

Upvote 6 (6 / 0)

Mario_van_Pipes

Wise, Aged Ars Veteran

Ugh I hate review embargoes, because it would be useful to compare the 5070 versus the 9070 NOW instead of a couple of days later.

I'm really hoping that AMD can catch Nvidia sleeping here and make up some good ground. AMD's done it already with Intel in the CPU space, and Nvidia is really banking hard on supplanting high card prices with AI, so here's to hoping.

Per the previously mentioned Gamers Nexus editorial on AMD's 9070-series announcement, they have the opportunity to pull the tiger's tail here. However, I was not aware that the CPU and GPU teams are still effectively antagonistic to each other. Let's hope they managed to patch things up, because once they do it's game on for AMD in the high-end market again.

Upvote -7 (2 / -9)

RickRoyLeonPrisZhoraRacha

Ars Praetorian

Yeah, it really really sucks when you basically only have a day to get the card, if that.

FWIW the Gamers Nexus review is doing a lot of wink wink nudge nudge by comparing it to some AMD cards that they are heavily implying the 9070 roughly equates to. If the point they're making about the 9700 XT Hellhound is true, the AMD cards are going to be pretty predictably head and shoulders better in raster and slightly to significantly worse at RT depending on title.

I still want to see apples to apples comparisons, though, especially with RT because that's where the real test is for AMD this time around.

As long as AMD doesn't drop the ball on price!

Upvote -9 (2 / -11)

As someone who only dips into graphics card technology every five years or so when figuring out what I need to replace my prior card, I really wish either Nvidia or AMD would choose a different naming scheme for their GPUs.

Either way, the 3080 I managed to pick up at retail price a few months into COVID is still doing quite well, and I doubt I'll need to replace it any time soon. I know bigger number is better, but my eyes can no longer tell the difference, if they ever could.

Upvote 30 (34 / -4)

My 3070 is starting to get a bit old, I'll probably replace it with a 9070 xt if I can manage to get one. Which will be the first non-Nvidia card I've bought for myself in about 20 years.

Upvote 24 (25 / -1)

David Mayer

Wise, Aged Ars Veteran

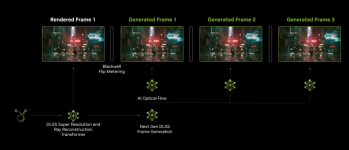

Pedantry against the trend: these aren't "interpolated frames", they're extrapolated frames. Interpolation draws from the data on both sides of the created data point (the frame or frames before and the frame or frames after); extrapolation draws from the data on only one side of the data point (the frame or frames before or after), not both. This tech extrapolates, as it completes generation of the new frame(s) before subsequent ones exist.

All that said, I'm late to the party and there's no hope of overriding this linguistic trend, so, sucks to be a pedant I guess.

Edit: I'm wrong, and nvidia is actually interpolating. According to this video at 12:48, they are analyzing two frames and generating frames in between them. I made the mistake because I assumed they wouldn't just throw away latency to get these results.

there's really you know two uh rendered frames that that we're analyzing in order to create a series of of frames in between
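For anyone following the interpolation vs. extrapolation argument, here is a toy sketch of the difference. It is not how DLSS frame generation actually works: frames are stand-in lists of numbers, and the function names `interpolate` and `extrapolate` are made up for illustration.

```python
def interpolate(prev_frame, next_frame, t):
    """Blend between two frames that have BOTH already been rendered.

    t is the position between them, 0 < t < 1. Because next_frame must
    exist first, the generated frame can only be shown after it is
    rendered, which is where the added latency comes from.
    """
    return [(1 - t) * a + t * b for a, b in zip(prev_frame, next_frame)]


def extrapolate(older_frame, prev_frame, t):
    """Project forward from past frames only (data on one side).

    t > 0 is how far past prev_frame to project, in units of the gap
    between older_frame and prev_frame. No future frame is needed, but
    the guess can be wrong if motion changes.
    """
    return [b + t * (b - a) for a, b in zip(older_frame, prev_frame)]


if __name__ == "__main__":
    frame_a = [0.0, 10.0]  # toy "pixel values" for an earlier frame
    frame_b = [4.0, 20.0]  # toy "pixel values" for a later frame
    print(interpolate(frame_a, frame_b, 0.5))  # halfway between a and b
    print(extrapolate(frame_a, frame_b, 0.5))  # half a step beyond b
```

The first call needs both rendered frames in hand; the second only looks backward, which is the one-sided case the original post was describing.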

Upvote 25 (38 / -13)

I, for one, still only care about 1:1 raster performance. Some games may require RT, but until it's forced on, I won't ever use it. I always dial back all the effects anyway, because I find them distracting at best, and they give me headaches at worst.

In that light, and because I am forever a supporter of an underdog (since the 486DX-2 50MHz), I will stick with AMD.

I recently purchased an RX 7600 XT to replace my RX 580, but, while it was a big improvement in frame rates, I returned it to wait and see what the RX 9060 will look like. I've never spent more than $250 on a GPU, and I hope I never will. This arbitrary inflation has gotten way out of hand.

Edit: typo

Upvote 13 (26 / -13)

I recently purchased an RX 7600 XT to replace my RX 580, but, while it was a big improvement in frame rates, I returned it to wait and see what the RX 9060 will look like. I've never spent more than $250 on a GPU, and I hope I never will. This arbitrary inflation has gotten way out of hand.

Edit: typo

This is similar to my buying habits - except I've doubled your budget. I got lucky and scooped up a 3080ti when the crypto market cratered and it was claimed to be NIB for $600.

There is no way in hell I'm dropping four figures on a 10-15% increase in performance along with AI generated frames. Also I will not support the scarcity markets and second hand scalpers - they can all go DIAF.

Edit: four figures before the period. facepalm

Upvote 12 (14 / -2)

My 3070 is starting to get a bit old, I'll probably replace it with a 9070 xt if I can manage to get one. Which will be the first non-Nvidia card I've bought for myself in about 20 years.

After the recent news of NVIDIA shipping GPUs missing ROPs and getting called out for it... I'm leaning heavily on an all AMD platform for my next generation of gaming computer (probably in two or three years... lol)

Upvote 8 (9 / -1)

You should source your graph so we can at least judge the conditions it was derived from.

Upvote 37 (37 / 0)

I'm not qualified to question the allegations of laziness due to a perceived lack of competition. But I'm wondering if we aren't just bumping up against diminishing returns for graphics technology such that generational improvements (that don't come from die-shrinks, more VRAM, and image processing tricks) are just not on the table anymore.

While the stock market would scream and howl at that level of honesty and candor, it'd feel a lot more fair if Nvidia, if not saying the words, would at least price accordingly for the real-world market. Instead we get patented frame-smearing technology and are told it's a mid-range powerhouse.

Edit: typos, clarity

Upvote 18 (19 / -1)

My 3070 is starting to get a bit old, I'll probably replace it with a 9070 xt if I can manage to get one. Which will be the first non-Nvidia card I've bought for myself in about 20 years.

Same position I'm in with my 2080 (that's probably roughly equal to your 3070). I'm also looking to get a 9070xt. I'd get an nVidia if I could find a 5080 at $1,000 MSRP, but apparently that's not to be. Also, I'm a bit worried about that 12vhp(sp?) cable/interface fiasco.

Upvote 5 (7 / -2)

You should source your graph so we can at least judge the conditions it was derived from.

It's from Hardware Unboxed/Techspot, and pretty misleading considering they're using MSRP. The 7900xt has been way under MSRP and the 5000 series cards from Nvidia are vaporware at MSRP.

ETA: and it shows the 7800xt in a better light, considering it's been around $550 for the last month or so

Upvote 36 (36 / 0)

It's all going to come down to how good AMD's ray tracing and upscaling are. Those features are where nvidia has been killing it, to the point that a pure raster-to-raster comparison doesn't really tell the tale any more. Doubly so now that we have games flat out requiring RT, and if it's going to be a requirement you'd like it to be good.

Actually I think pure raster-to-raster comparison is more important than ever. We need to strip out the artificial frames and look at the raw power of these cards head-to-head. If card A beats card B with no frame gen, AI, magic, etc then I bet it's going to offer a better experience than card B AFTER you add the fluff...

Upvote 24 (25 / -1)

As someone who only dips into figuring out which card to buy every five years or so, I really wish either Nvidia or AMD would choose a different naming scheme for their GPUs.

What are you talking about? The new 9070 will fit in just fine with my Radeon 9500 from a couple decades ago.

Upvote 22 (23 / -1)

Same position I'm in with my 2080 (that's probably roughly equal to your 3070). I'm also looking to get a 9070xt. I'd get an nVidia if I could find a 5080 at $1,000 MSRP, but apparently that's not to be. Also, I'm a bit worried about that 12vhp(sp?) cable/interface fiasco.

I still have a cap of around $500-$600 that I'll pay for a video card personally. $1000 is a difficult pill for me to swallow.

Upvote 27 (27 / 0)

There is no way in hell I'm dropping six figures on a 10-15% increase in performance along with AI generated frames. Also I will not support the scarcity markets and second hand scalpers - they can all go DIAF.

I'm sorry... HOW much do you think these retail for?

Six figures refers to a number that has six digits, specifically any amount between $100,000 and $999,999.

Upvote 34 (35 / -1)

I still have a cap of around $500-$600 that I'll pay for a video card personally. $1000 is a difficult pill for me to swallow.

Good point. That's also a very attractive benefit. nVidia dropping the ball on video cards to concentrate on the AI market may make AMD the gaming leader over the next few years.

Upvote 0 (1 / -1)

macosandlinux

Ars Tribunus Militum

"4090 performance at $549.*"

* When compared at 640x480 on the RTX 5070.

Upvote 12 (13 / -1)

unshavenyak

Ars Scholae Palatinae

As someone who has been out of the gaming PC building arena since the 5700-era, I can't believe how shitty the market is now. Not just for PCs, but also consoles. I can't believe you need a PS5 Pro to run MH: Wilds at a capped 60 fps using FSR1. What the hell happened?

Upvote 12 (14 / -2)

As someone who has been out of the gaming PC building arena since the 5700-era, I can't believe how shitty the market is now. Not just for PCs, but also consoles. I can't believe you need a PS5 Pro to run MH: Wilds at a capped 60 fps using FSR1. What the hell happened?

I've been gaming since the first Atari console, so I'm kind of used to things progressing bit by bit. As far as the current market? I blame coin mining, then covid, and now AI. nVidia is currently making most of their money off AI now.

From https://www.reddit.com/r/pcmasterrace/comments/1awtso6/nvidia_made_29b_from_gaming_last_quarter_vs_184b/

Upvote 31 (31 / 0)

ChefJeff789

Ars Tribunus Militum

Anything using the 12VHPWR connector this gen should be avoided, period. I don't care how much wattage it's carrying - failing to current-balance power delivery across a 16-pin power cable is insane and should be grounds for a successful, painful lawsuit. Consumers need to soundly reject this nonsense.

Upvote 34 (36 / -2)

I still have a cap of around $500-$600 that I'll pay for a video card personally. $1000 is a difficult pill for me to swallow.

I thought I was in the same boat, and then Newegg magically had availability on an Asus 5070Ti that I plunked a cool gurr down for.

Kinda puked in my mouth when I completed checkout, but also coming from an RTX 2070 the change (and performance upgrade) has been completely worth it.. loosely used term

Upvote -5 (4 / -9)

Rob_Arctor

Seniorius Lurkius

Pedantry against the trend: "interpolated frames", these are extrapolated frames, interpolation draws from the data on both sides of the created data point (the frame or frames before and the frame or frames after), extrapolation draws from the data on only one side of the data point (the frame or frames before or after) not both. This tech extrapolates as it completes generation of the new frame(s) before subsequent ones exist.

All that said, I'm late to the party and there's no hope of overriding this linguistic trend, so, sucks to be a pedant I guess.

This tech (DLSS 4 MFG) does NOT extrapolate, it still very much interpolates, as mentioned by Nvidia's own VP of Applied Deep Learning Research, Bryan Catanzaro:

View: https://youtu.be/uyxXRXDtcPA?t=768

There are plenty of deplorable linguistic trends out there, this is not one of them.

Upvote 19 (19 / 0)

David Mayer

Wise, Aged Ars Veteran

This tech (DLSS 4 MFG) does NOT extrapolate, it still very much interpolates, as mentioned by Nvidia's own VP of Applied Deep Learning Research, Bryan Catanzaro:

View: https://youtu.be/uyxXRXDtcPA?t=768

There are plenty of deplorable linguistic trends out there, this is not one of them.

Can you please link the relevant timestamp, I'm not going through a 30 minute video for this.

Edit1: Took a quick skim, from your video, a graphic showing extrapolation:

Edit2: Why TF would you interpolate anyway, that would deliberately add latency.

Edit3: This was driving me a little nuts, I found the timestamp, 12:48, thank goodness for searchable transcripts.

there's really you know two uh rendered frames that that we're analyzing in order to create a series of of frames in between

In the future please include a timestamp and a quote in your video citations.

Upvote -4 (16 / -20)

BobbyBobberson

Ars Scholae Palatinae

You should source your graph so we can at least judge the conditions it was derived from.

View: https://youtu.be/qPGDVh_cQb0?si=qQVHLojzyZ6KmgsK

Upvote 3 (5 / -2)